BYO AI means exactly that: Bring Your Own Artificial Intelligence

We have built Table of One to be fed to any AI of your choosing. There are, however, some limitations and constraints, namely that not all AIs are equal.

Accessibility: A core objective of Table of One

One of the objectives of Table of One is to make the system as accessible as possible to all users. This has meant trying to build our system to work on the free tier of any AI system. The issue is that each AI places different limits on how heavily you can lean on it.

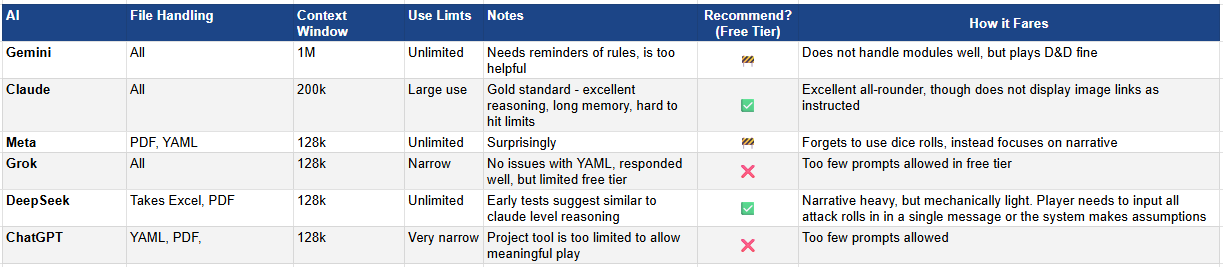

The table below summarises what we have learned so far from testing the various AIs available, and whether we recommend each one.

Winner: Claude

Second Place: DeepSeek

In our testing, Claude did not provide image links as our rulesets and modules require, but it handled being a Dungeon Master very well mechanically, narratively and intuitively. The image below shows how the system could not access a Google Drive link and instead searched for other images.

This indicates that module images need to be hosted publicly in order to be found, which is fine, but a mildly annoying consideration for module development.

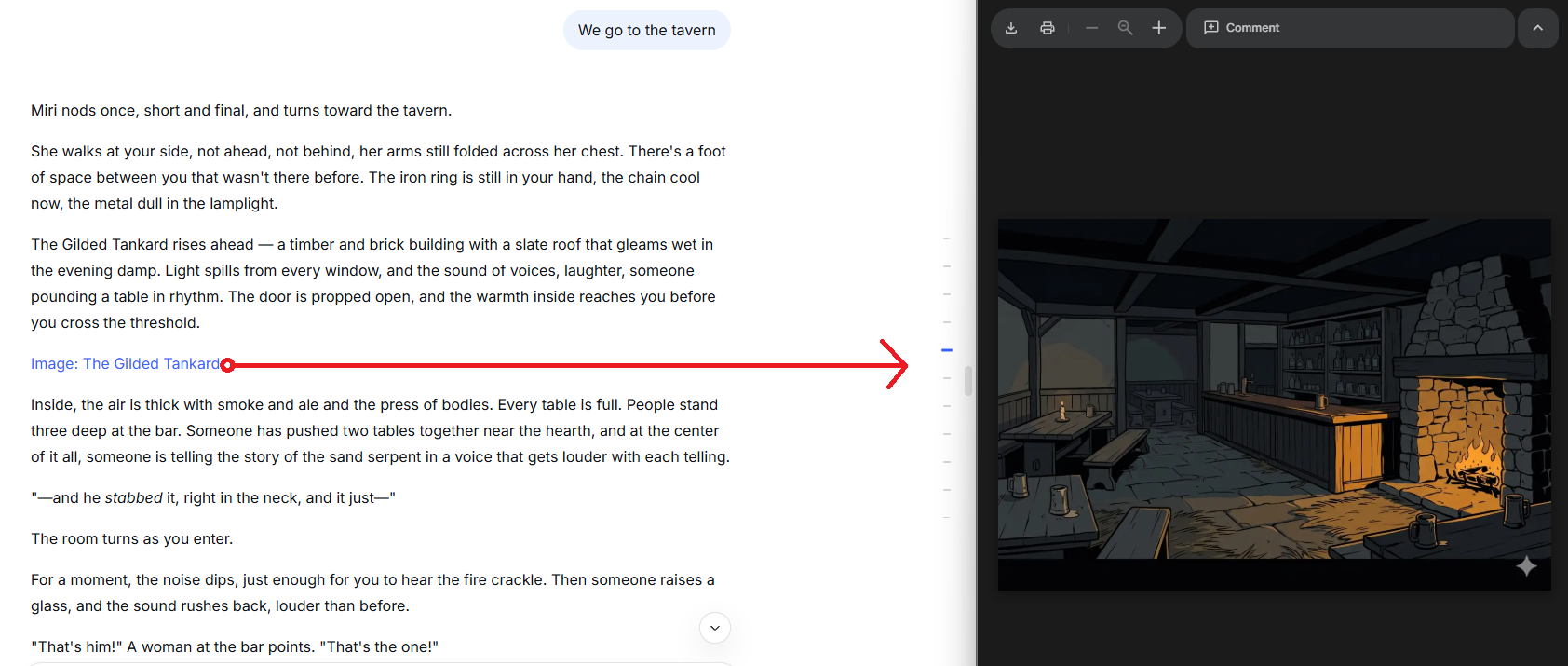

DeepSeek, on the other hand, whilst not as mechanically proficient as Claude, handled narrative play perfectly well and gave links to Google Drive images without issues. DeepSeek was narratively immersive, wrote engaging character dialogue and set scenes up well. However, it handled combat in a more narrative format, with every decision succeeding instantly rather than being resolved by a dice roll.

Less Than Ideal: Gemini & Meta.ai

Gemini, meanwhile, played very well mechanically, but struggled to follow the story module and did not deliver plot hooks as the story module instructions require. The system has a 1,000,000-token context window, which holds a lot of promise for D&D: in theory, a window that large should let it run a D&D campaign with a relatively long memory. However, its inability to “connect the dots” across modules made this difficult. At a later date we will test combining all three modules into a single document and see how it handles that.

Meta was similar to DeepSeek in that it wrote excellent narrative, but failed at the mechanical aspects (forgetting to ask for dice rolls) and did not offer links to images.

Failures: Grok & ChatGPT

These two platforms performed very well technically, but their free-tier limits were so restrictive that our testing only allowed around 15 minutes of play per session before we hit the limiter. We don’t recommend them, on the basis of accessibility.

(If you have a paid account with either of these platforms and would be happy to playtest for us, we would love to hear from you via our Contact Page.)